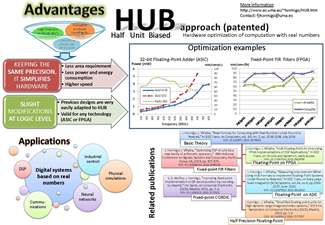

Half Unit Biased Approach

It optimizes the hardware implementation of computation with real numbers

To Start

- HUB approach in a nutshell


- Brief overview of the HUB approach in a 5-minute video

wmv (15 MB)

mp4 (5 MB)


- Basic technical introduction to how HUB works

wmv (33 MB)

mp4 (14 MB)


Research Publications

- [1] J. Villalba, J. Hormigo and S. Gonzalez Navarro, "Unbiased Rounding for HUB Floating-point Addition," in IEEE Transactions on Computers, vol. PP, no. 99, pp. 1-1. doi: 10.1109/TC.2018.2807429 (author PDF)

URL: http://ieeexplore.ieee.org/stamp/stamp.jsp?tp=&arnumber=8300633&isnumber=4358213

- [2] J. Villalba-Moreno, "Digit Recurrence Floating-Point Division under HUB Format," 2016 IEEE 23rd Symposium on Computer Arithmetic (ARITH), Santa Clara, CA, 2016, pp. 79-86. doi: 10.1109/ARITH.2016.17 (author PDF)

URL: http://ieeexplore.ieee.org/stamp/stamp.jsp?tp=&arnumber=7563275&isnumber=7563255

- [3] J. Villalba-Moreno and J. Hormigo, "Floating Point Square Root under HUB Format," 2017 IEEE International Conference on Computer Design (ICCD), Boston, MA, 2017, pp. 447-454. doi: 10.1109/ICCD.2017.79 (author PDF)

URL: http://ieeexplore.ieee.org/stamp/stamp.jsp?tp=&arnumber=8119252&isnumber=8119172

- [4] J. Hormigo and J. Villalba, "Measuring Improvement When Using HUB Formats to Implement Floating-Point Systems Under Round-to-Nearest," in IEEE Transactions on Very Large Scale Integration (VLSI) Systems, vol. 24, no. 6, pp. 2369-2377, June 2016. doi: 10.1109/TVLSI.2015.2502318 (author PDF)

URL: http://ieeexplore.ieee.org/stamp/stamp.jsp?tp=&arnumber=7349231&isnumber=7476004

- [5] J. Hormigo and J. Villalba, "HUB-Floating-Point for improving FPGA implementations of DSP Applications," in IEEE Transactions on Circuits and Systems II: Express Briefs, vol. 64, no. 3, pp. 319-323. doi: 10.1109/TCSII.2016.2563798 (author PDF)

URL: http://ieeexplore.ieee.org/stamp/stamp.jsp?tp=&arnumber=7465754&isnumber=4358609

- [6] J. Hormigo and J. Villalba, "New Formats for Computing with Real-Numbers under Round-to-Nearest," in IEEE Transactions on Computers, vol. 65, no. 7, pp. 2158-2168, July 1 2016. doi: 10.1109/TC.2015.2479623 (author PDF)

URL: http://ieeexplore.ieee.org/stamp/stamp.jsp?tp=&arnumber=7270998&isnumber=7486166

- [7] S. D. Muñoz and J. Hormigo, "Improving fixed-point implementation of QR decomposition by rounding-to-nearest," 2015 International Symposium on Consumer Electronics (ISCE), Madrid, 2015, pp. 1-2. doi: 10.1109/ISCE.2015.7177822 (author PDF)

- [8] J. Hormigo and J. Villalba, "Simplified floating-point units for high dynamic range image and video systems," 2015 International Symposium on Consumer Electronics (ISCE), Madrid, 2015, pp. 1-2. doi: 10.1109/ISCE.2015.7177797 (author PDF)

- [9] J. Hormigo and J. Villalba, "Optimizing DSP circuits by a new family of arithmetic operators," 2014 48th Asilomar Conference on Signals, Systems and Computers, Pacific Grove, CA, 2014, pp. 871-875. doi: 10.1109/ACSSC.2014.7094576 (author PDF)

URL: http://ieeexplore.ieee.org/stamp/stamp.jsp?tp=&arnumber=7094576&isnumber=7094373

Patents

Spanish patents granted

- Hormigo, J.; Villalba, J. Multiplicadores coma flotante y conversores asociados [Floating-point multipliers and associated converters], ES2546895B2, 2014. (Link)

- Hormigo, J.; Villalba, J. Dispositivos coma flotante y conversores [Floating-point devices and converters], ES2546898B2, 2014. (Link)

- Hormigo, J.; Villalba, J. Sumadores coma flotante y conversores [Floating-point adders and converters], ES2546916B2, 2014. (Link)

- Hormigo, J.; Villalba, J. Unidades aritméticas en coma fija y conversores asociados [Fixed-point arithmetic units and associated converters], ES2546915B2, 2014. (Link)

- Hormigo, J.; Villalba, J. Dispositivos para operaciones de multiplicación-suma fusionadas en coma flotante y conversores asociados [Devices for floating-point fused multiply-add operations and associated converters], ES2546899B2, 2014. (Link)

International patent application (PCT)

- Hormigo, J.; Villalba, J. ARITHMETIC UNITS AND RELATED CONVERTERS, WO/2015/144950. (Link)

PCT 30-month deadline is 28th September 2016.

FAQ

(Please send your own questions to fjhormigo@uma.es)

- Isn't the HUB approach a kind of "rounding by injection"?

Not at all. "Rounding by injection" is a technique to simplify rounding that produces a conventional number rounded to nearest. HUB, however, is a new format with the property that when a value is truncated to generate a HUB number, the result is the HUB number nearest to the original value. It does not matter where this original value comes from (an operation with conventional numbers, an operation with HUB numbers, or even just an input number).

When injection is applied, an error (the injected value) is added to the exact result, and this shifted result is then truncated to obtain a conventional number; it is the injected error that makes truncation produce rounding. Under the HUB approach, in contrast, explicitly appending the ILSB (implicit least significant bit) to the input values introduces no error: the value is the same, only now represented in conventional format. It can therefore be operated on in the conventional way (because it is a conventional number), and the intermediate value obtained is exact. When rounding is required, truncation yields a HUB number rounded to nearest at the output. In this case, it is the target format (HUB) that makes truncation produce rounding.
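
The contrast can be sketched with exact rational arithmetic. This is a minimal illustration, assuming unsigned fixed-point values with n fractional bits (ULP = 2^(-n)); the function names are hypothetical and not taken from any HUB library. Injection adds half a ULP before truncating and lands on the conventional grid, whereas HUB simply truncates and the ILSB places the result half a ULP above the truncated value.

```python
from fractions import Fraction
import math

# Assumption: unsigned fixed-point values with n fractional bits, ulp = 2**-n.
# Exact intermediate results are modelled with Fraction (no hidden rounding).

def round_by_injection(x: Fraction, n: int) -> Fraction:
    """Conventional round-to-nearest: inject half an ulp, then truncate.
    The result lies on the conventional grid k * 2**-n."""
    ulp = Fraction(1, 2 ** n)
    return math.floor((x + ulp / 2) / ulp) * ulp

def hub_truncate(x: Fraction, n: int) -> Fraction:
    """HUB rounding: truncate to n explicit bits, nothing is injected.
    The implicit LSB (ILSB = 1) puts the result at k * 2**-n + 2**-(n+1)."""
    ulp = Fraction(1, 2 ** n)
    return math.floor(x / ulp) * ulp + ulp / 2

def nearest_hub(x: Fraction, n: int) -> Fraction:
    """Brute-force reference (for checking only): the HUB number closest to x."""
    ulp = Fraction(1, 2 ** n)
    k = math.floor(x / ulp)
    candidates = [(j + Fraction(1, 2)) * ulp for j in (k - 1, k, k + 1)]
    return min(candidates, key=lambda h: abs(h - x))

if __name__ == "__main__":
    n = 3
    x = Fraction(11, 32)  # 0.01011 in binary: needs 5 fractional bits
    print("exact     :", x)
    print("injection :", round_by_injection(x, n))  # conventional result
    print("HUB trunc :", hub_truncate(x, n))        # HUB result
```

For x = 11/32 and n = 3, injection gives the conventional value 3/8 and HUB truncation gives the HUB value 5/16. Both are 1/32 away from x, but they are different numbers belonging to different formats.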

- Could I use HUB internally if my design has conventional inputs/outputs?

Yes, of course. Conversion to HUB at the input can be fused with the first rounding into a single operation without additional error, and the same applies to the conversion from HUB to conventional format at the output.

The conventional input values can be operated on as usual, and only when rounding is required is a truncation used to produce a HUB number rounded to nearest. Similarly, the last intermediate result (which is a conventional value, even though it comes from an operation with HUB numbers) can be rounded in the conventional way to generate the output in conventional format. With this procedure, the conversions introduce no extra rounding error.
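
A minimal sketch of that procedure for a hypothetical datapath computing (a*b)*c, assuming a single fixed-point format with N = 8 fractional bits for inputs, intermediate, and output (N, the function names, and the datapath itself are illustrative assumptions). The conventional inputs feed the first multiplication directly, the rounding of that product (a truncation) is what produces the HUB intermediate, and the last rounding produces the conventional output, so only the two roundings the computation needs anyway take place.

```python
from fractions import Fraction
import math

N = 8                      # assumed number of fractional bits (all formats)
ULP = Fraction(1, 2 ** N)

def to_hub(x: Fraction) -> Fraction:
    """Round an exact value to HUB: truncate to N bits, then append the ILSB."""
    return math.floor(x / ULP) * ULP + ULP / 2

def to_conventional(x: Fraction) -> Fraction:
    """Conventional round-to-nearest (ties rounded up)."""
    return math.floor(x / ULP + Fraction(1, 2)) * ULP

def scaled_product(a: Fraction, b: Fraction, c: Fraction) -> Fraction:
    """Hypothetical datapath (a * b) * c with conventional inputs and output
    and a single HUB intermediate."""
    # Conventional inputs are used as they are: no separate conversion step.
    # The rounding the first product needs anyway is a truncation to HUB.
    t = to_hub(a * b)
    # t (explicit bits plus its ILSB) is an exact conventional value with one
    # extra bit, so the second product is computed as usual and its single
    # conventional rounding yields the conventional output.
    return to_conventional(t * c)

if __name__ == "__main__":
    a, b, c = Fraction(77, 256), Fraction(200, 256), Fraction(129, 256)
    out = scaled_product(a, b, c)
    print("exact :", a * b * c)
    print("output:", out, "=", float(out))
```

The total error stays within the usual bound for two roundings, ULP/2 from the final rounding plus |c|·ULP/2 propagated from the intermediate one.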

- Does the HUB format provide exactly the same results as the IEEE format?

No, it doesn't, but it provides the same precision. This means that, although the result values are different, the error bound and the other statistical properties of the rounding errors are the same. This has been proved both theoretically and experimentally, as shown in references [4], [5], and [6].
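
A toy check of the "same precision" statement, assuming fixed-point values in [0, 1) with n fractional bits and exact inputs spread uniformly on a finer grid with extra_bits additional bits. This is a hypothetical test setup, not the methodology of [4]-[6]. It enumerates every such input and compares HUB truncation with conventional round-to-nearest (ties rounded up).

```python
from fractions import Fraction
import math

def hub_round(x: Fraction, n: int) -> Fraction:
    """HUB: truncate to n explicit bits and append the ILSB."""
    ulp = Fraction(1, 2 ** n)
    return math.floor(x / ulp) * ulp + ulp / 2

def conv_round(x: Fraction, n: int) -> Fraction:
    """Conventional round-to-nearest, ties rounded up (x >= 0 assumed)."""
    ulp = Fraction(1, 2 ** n)
    return math.floor(x / ulp + Fraction(1, 2)) * ulp

def error_stats(round_fn, n: int, extra_bits: int):
    """Max and mean absolute error (in ulps) of round_fn over every value
    in [0, 1) that has n + extra_bits fractional bits."""
    ulp = Fraction(1, 2 ** n)
    step = Fraction(1, 2 ** (n + extra_bits))
    errs = [abs(round_fn(k * step, n) - k * step) / ulp
            for k in range(2 ** (n + extra_bits))]
    return max(errs), sum(errs) / len(errs)

if __name__ == "__main__":
    for name, fn in (("HUB", hub_round), ("conventional", conv_round)):
        mx, mean = error_stats(fn, n=4, extra_bits=6)
        print(f"{name:12s} max = {mx} ulp, mean = {mean} ulp")
```

Both formats report a maximum error of 1/2 ULP and a mean absolute error of 1/4 ULP on this grid, even though the individual rounded values differ.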

- A simple case that appears to cause frequent errors in HUB format is when a number can be exactly represented in IEEE format, such as 1 or 0.5, but HUB format always introduces a small error due to the ILSB. In applications where most numbers are of the form 2^(-n), HUB will accumulate many small errors over repeated computations.

This example does not prove that HUB format has less precision than the IEEE standard.

It is absolutely true that if a value can be exactly represented in IEEE format (that is, it is an ERN, an exactly represented number), HUB introduces an error that is actually the highest possible, 0.5 ULP. Since, by definition, the ERNs of the IEEE format are shifted by 0.5 ULP in the HUB format, IEEE ERNs are represented with the maximum error under HUB format.

However, that does not mean that HUB format is less accurate than IEEE format, since it also works the other way around: the ERNs under HUB format are represented with the maximum error (0.5 ULP) under IEEE format. For single precision, values of the form 2^(-n)+2^(-n-24) are represented under the IEEE standard with an error of 0.5 ULP, whereas they are exactly represented under HUB format. Indeed, as stated in the paper, both errors are always complementary, i.e., |eIEEE| + |eHUB| = 0.5 ULP. Therefore, the better a value is represented under one format, the worse it is represented under the other.

I cannot imagine any floating-point application where values of the form 2^(-n) appear more often than values of the form 2^(-n)+2^(-n-24). However, there are lots of applications where numbers better represented under IEEE are just as likely as numbers better represented under HUB format, such as DSP, physics simulation, neural networks, and computer graphics.
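
The complementary-error relation can be checked numerically. This sketch models only the significand grid of single precision (P = 24 bits, hidden bit included), ignores exponent range, subnormals, and overflow, and uses exact fractions; the helper names are hypothetical. IEEE rounding here is round-to-nearest, ties-to-even, and HUB rounding is truncation plus the ILSB.

```python
from fractions import Fraction
import math

P = 24  # significand bits of IEEE single precision, hidden bit included

def exponent(x: Fraction) -> int:
    """Exact floor(log2(x)) for x > 0."""
    e = x.numerator.bit_length() - x.denominator.bit_length()
    return e if x >= Fraction(2) ** e else e - 1

def ulp(x: Fraction) -> Fraction:
    """ULP of the binade containing x (exponent range is not modelled)."""
    return Fraction(2) ** (exponent(x) - (P - 1))

def round_ieee(x: Fraction) -> Fraction:
    """Round to nearest, ties to even, on the conventional P-bit grid."""
    u = ulp(x)
    k = math.floor(x / u)
    r = x / u - k
    if r > Fraction(1, 2) or (r == Fraction(1, 2) and k % 2 == 1):
        k += 1
    return k * u

def round_hub(x: Fraction) -> Fraction:
    """HUB rounding: truncate to P explicit bits and append the ILSB."""
    u = ulp(x)
    return math.floor(x / u) * u + u / 2

def errors_in_ulps(x: Fraction):
    """(|e_IEEE|, |e_HUB|) for x, both measured in ULPs of x's binade."""
    u = ulp(x)
    return abs(round_ieee(x) - x) / u, abs(round_hub(x) - x) / u

if __name__ == "__main__":
    n = 3
    for x in (Fraction(1), Fraction(1, 2),
              Fraction(1, 2 ** n) + Fraction(1, 2 ** (n + 24)),
              Fraction(1, 3)):
        e_ieee, e_hub = errors_in_ulps(x)
        print(f"{str(x):>22s}  |e_IEEE| = {e_ieee}  |e_HUB| = {e_hub}")
```

For 1 and 0.5 it reports |e_IEEE| = 0 and |e_HUB| = 1/2 ULP, for 2^(-3)+2^(-27) it reports the opposite, and for 1/3 it gives 1/3 and 1/6; in every case the two errors add up to 1/2 ULP.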

- How can "traditional" FP numbers be converted to the HUB format and back?

Conversions between HUB-FP and conventional FP numbers are addressed in reference [4]. In both directions the conversion implies rounding, but no explicit operation is required for tie-to-away rounding, and only the value of the LSB has to be forced for tie-to-even-like rounding.
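
One reading of that statement, sketched on unsigned integer significands with n explicit bits (an assumption; the actual schemes are described in [4]). With the same number of explicit bits, every conventional number is a tie between two HUB numbers and vice versa, so any correct conversion moves the value by exactly half a ULP; the only choice is the direction. Values are counted in half-ULPs to keep everything integral. Forcing the LSB to 0 when converting to HUB and to 1 when converting back is our illustrative choice: those are the forced values that keep each result among its two nearest neighbors.

```python
# Minimal sketch (assumption: unsigned integer significands with n explicit bits).
# Values are counted in half-ulps so everything stays an integer:
#   conventional bits B           -> 2*B half-ulps
#   HUB bits H (ILSB = 1 implied) -> 2*H + 1 half-ulps

def conv_to_hub_away(b: int) -> int:
    """Conventional -> HUB, ties rounded up: reuse the bits unchanged."""
    return b

def conv_to_hub_unbiased(b: int) -> int:
    """Conventional -> HUB, unbiased: force the LSB to 0 (nearest is b-1 or b)."""
    return b & ~1

def hub_to_conv_unbiased(h: int) -> int:
    """HUB -> conventional, unbiased: force the LSB to 1 (nearest is h or h+1)."""
    return h | 1

def signed_errors(n: int):
    """Signed conversion error, in half-ulps, for every n-bit input pattern."""
    inputs = range(2 ** n)
    away = [(2 * conv_to_hub_away(b) + 1) - 2 * b for b in inputs]
    unbiased = [(2 * conv_to_hub_unbiased(b) + 1) - 2 * b for b in inputs]
    back = [2 * hub_to_conv_unbiased(h) - (2 * h + 1) for h in inputs]
    return away, unbiased, back

if __name__ == "__main__":
    away, unbiased, back = signed_errors(4)
    # every individual error is +1 or -1 half-ulp, i.e. a nearest result
    print("tie-away bias :", sum(away))
    print("forced-LSB    :", sum(unbiased), sum(back))
```

With n = 4, the unchanged-bits conversion accumulates +16 half-ULPs over the 16 inputs (every tie rounds up), while both forced-LSB conversions sum to 0.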

- Can HUB approach produce unbiased rounding similar to tie-to-even?

Yes, it can. Actually, the tie case is not possible when rounding to a HUB number, and no sticky-bit computation is required in floating-point computation. However, under certain circumstances the intermediate result of an FP addition may be a tie, but some simple hardware can be used to guarantee an unbiased rounded result. The general theory of unbiased rounding is explained in [7], and unbiased rounding for the conversion between HUB and conventional FP is explained in [4].
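
Where ties come from can be illustrated on fixed-point HUB operands. This is a minimal sketch, assuming unsigned HUB numbers in [0, 1) with n explicit fractional bits; the helper names are hypothetical. Rounding to HUB is a plain truncation that only looks at the bits above the cut, and a tie means the exact value sits on the conventional grid, halfway between two HUB numbers. A product of two HUB values is odd in its last position, so it never lands on that grid, while in fixed point the two ILSBs of a sum always add up to exactly one ULP, so every sum is a tie. In floating point, alignment and normalization shifts change this picture, which is consistent with the text saying the tie appears only under certain circumstances.

```python
from fractions import Fraction
import math

# Assumption: unsigned HUB fixed-point numbers in [0, 1) with n explicit
# fractional bits; explicit bits H stand for H * 2**-n + 2**-(n+1).

def hub_value(h: int, n: int) -> Fraction:
    """Exact value of a HUB number with explicit bits h (ILSB included)."""
    return Fraction(2 * h + 1, 2 ** (n + 1))

def hub_round(x: Fraction, n: int) -> int:
    """Explicit bits of the rounded HUB number: a plain truncation, so only
    the bits above the cut are inspected (no sticky bit)."""
    return math.floor(x * 2 ** n)

def is_tie(x: Fraction, n: int) -> bool:
    """x is a tie for HUB rounding when it lies exactly on the conventional
    grid k * 2**-n, i.e. halfway between two HUB numbers."""
    return (x * 2 ** n).denominator == 1

if __name__ == "__main__":
    n = 4
    hubs = [hub_value(h, n) for h in range(2 ** n)]
    prod_ties = sum(is_tie(a * b, n) for a in hubs for b in hubs)
    sum_ties = sum(is_tie(a + b, n) for a in hubs for b in hubs)
    worst = max(abs(hub_value(hub_round(a * b, n), n) - a * b)
                for a in hubs for b in hubs)
    print("ties among products:", prod_ties)  # 0: odd * odd is odd
    print("ties among sums    :", sum_ties)   # 256: all 16 * 16 sums
    print("worst product error:", worst, "< half ulp =", Fraction(1, 2 ** (n + 1)))
```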